Social media, television channels, and video platforms around the world have been seeing a growing demand for subtitles. Until now, however, the main obstacle to offering subtitles more widely has been their high cost. Costly specialized equipment and a shortage of trained personnel have made it difficult for most companies to meet this rapidly growing public demand.

Due to some particular features of Japanese society that we will explore below, Japan is one of the countries in which this demand has been growing fastest. But while major broadcasters can leverage their resources to include subtitles in most of their programs, local stations, which play a very important role in the communities they serve, lack the financial means to do so.

Luckily, innovative technologies are now paving the way for more inclusive content.

Why Japan? Hearing disability and the need for subtitles

Japan is the country with the highest percentage of elderly adults in the world. The Japanese Hearing Aid Industry Association currently estimates that as many as 14.7 million people, more than 10% of the country’s population, have some degree of hearing loss.

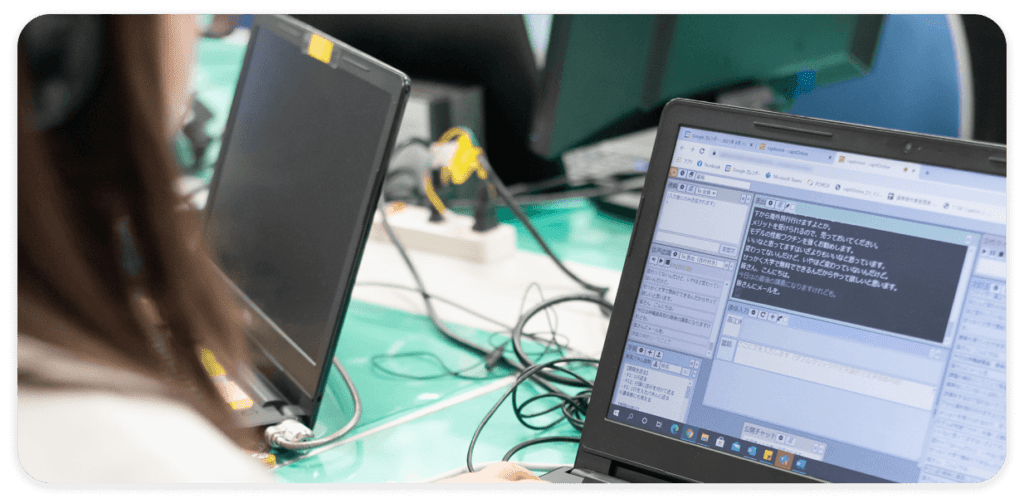

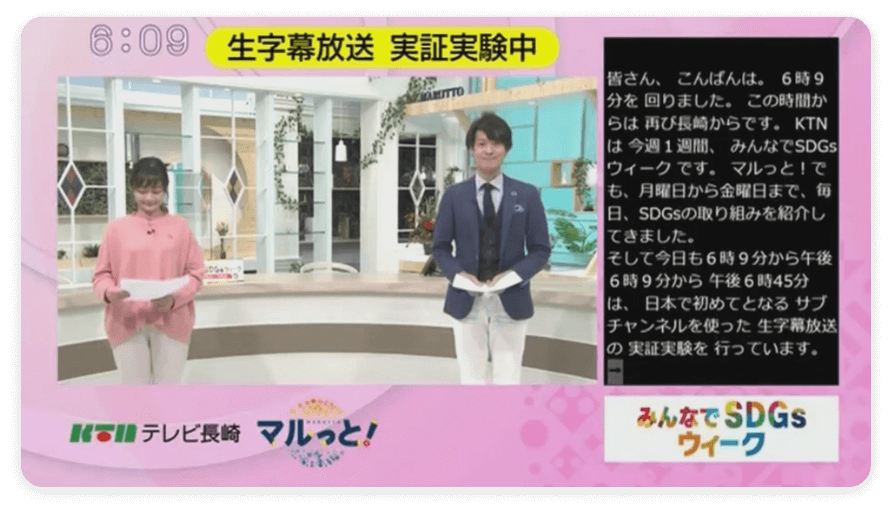

To address the increasing need for subtitles, an innovative Japanese startup named SI-com, affiliated with a larger company called ISCEC Japan, has been piloting an AI-powered system that provides subtitles for live broadcasts. The system, called AI-Mimi, combines Microsoft Azure Cognitive Services with human guidance to create fast, accurate subtitles for local station programs. The innovation has gained wide recognition and has been awarded a Microsoft AI for Accessibility grant.
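The article does not describe how AI-Mimi works internally. Purely as an illustration of what "machine recognition plus human guidance" can look like, here is a minimal Python sketch under stated assumptions: segments the recognizer is confident about pass straight through, while uncertain ones are handed to a human corrector first. Every name here (`Segment`, `caption_stream`, the `0.85` threshold) is hypothetical, and the recognizer is stubbed out, so no real Azure API is called.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List


@dataclass
class Segment:
    """One chunk of machine-recognized speech (hypothetical structure)."""
    text: str          # transcript produced by a speech recognizer
    confidence: float  # assumed score between 0.0 and 1.0


def caption_stream(
    segments: Iterable[Segment],
    correct: Callable[[str], str],
    threshold: float = 0.85,  # assumed cut-off, not a value from the article
) -> List[str]:
    """Return captions, routing low-confidence segments through a human."""
    captions = []
    for seg in segments:
        if seg.confidence >= threshold:
            # Confident machine output is shown as-is to keep latency low.
            captions.append(seg.text)
        else:
            # Uncertain output is passed to the human corrector first.
            captions.append(correct(seg.text))
    return captions


# Example: a stand-in "human" that fixes one known misrecognition.
segments = [Segment("good evening", 0.95), Segment("nagasaky news", 0.40)]
captions = caption_stream(segments, lambda t: t.replace("nagasaky", "Nagasaki"))
print(captions)
```

In a live setting the corrector would be a person working in an editing interface rather than a function, and the trade-off between speed and accuracy would depend on how the threshold is tuned.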

After collecting and analyzing extensive user feedback, SI-com took a further step to improve subtitle readability. As a result of their research, subtitles are now displayed in 10 lines on the right side of the screen, instead of the traditional 2 lines at the bottom. A larger font is also used to make the text easier to read. The technology was shown to the public for the first time at a local station in Nagasaki in December 2021, and it received an excellent response.
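To make the 10-line layout concrete, the following is a small Python sketch of a rolling caption panel that keeps only the most recent lines on screen. It is not SI-com's implementation; the class name `SubtitlePanel` and the 20-character line width are assumptions chosen for the example, and the whitespace-based wrapping would need to be replaced with character-based wrapping for Japanese text.

```python
from collections import deque
import textwrap
from typing import List


class SubtitlePanel:
    """Rolling side panel that keeps only the newest caption lines."""

    def __init__(self, max_lines: int = 10, width: int = 20) -> None:
        self.width = width  # assumed characters per line
        # A deque with maxlen drops the oldest line automatically.
        self._lines = deque(maxlen=max_lines)

    def push(self, caption: str) -> None:
        """Wrap a new caption to the panel width and append its lines."""
        for line in textwrap.wrap(caption, self.width):
            self._lines.append(line)

    def lines(self) -> List[str]:
        """Return the lines currently visible, oldest first."""
        return list(self._lines)


panel = SubtitlePanel()
for i in range(12):
    panel.push(f"caption number {i}")
print("\n".join(panel.lines()))
```

Compared with a two-line strip, a taller panel like this leaves each line on screen longer, which gives viewers more time to read before the text scrolls away.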

The right technology for a demand that is likely to grow

Ensuring accessible experiences for local communities has been the goal of SI-com and ISCEC from the start. But, as noted at the beginning of this article, demand for subtitles is growing worldwide, so the technology they created is likely to become more widely used in the coming years.

Aside from the growing demand for subtitles, we should take into account that, as time goes by, almost every developed country will likely end up with a percentage of elderly adults close to the one Japan has today. This means that more people will have hearing limitations, increasing the importance of subtitles as a way to make content more inclusive.

Many of us are bound to experience some sort of hearing limitation at some point. So we should be thankful for the aid of these intelligent machines, which will make the news, series, and movies we’ll watch in our old age more accessible and enjoyable.

To learn more about AI and the latest technological innovations, keep reading our blog and our AI Software Development Services section.